How can we make autonomous vehicles safer? It's a question engineers and researchers continue to wrestle with. Now, thanks to an experiment at Stanford University, an answer might be on the horizon.

The results of the experiment appear in the latest issue of the academic journal Science Robotics. The paper explains how a research team is using neural networks to improve how autonomous vehicles see the world, particularly in an emergency.

So what exactly is a neural network? In short, it's a form of artificial intelligence. While that in itself is nothing new, the way it learns is pretty slick. Rather than following hand-written rules, the AI is trained on large amounts of example data, gradually adjusting its internal parameters until its outputs match what it has seen. Then, when it's presented with a new situation, it can make a decision based on that past experience.

The idea is loosely inspired by how the human brain works, just on a far simpler level.
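For readers curious what "learning" means in practice, here is a minimal Python sketch: a tiny network with one hidden layer that nudges its numeric weights, step by step, to shrink its error on example data. The function name `train_tiny_network`, the toy sine-curve data, the layer size and the learning rate are all illustrative assumptions; this is not the Stanford team's model, which was trained on real vehicle data.

```python
import math
import random


def train_tiny_network(samples, hidden=4, lr=0.1, epochs=3000, seed=0):
    """Fit a 1-input, 1-output network with one tanh hidden layer.

    samples: list of (x, y) pairs. Returns (predict, losses), where losses
    holds the mean squared error measured before each epoch's update.
    """
    rng = random.Random(seed)
    # Weights start as small random numbers; "learning" means adjusting them.
    w1 = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-0.5, 0.5) for _ in range(hidden)]
    b2 = 0.0

    def forward(x):
        h = [math.tanh(w1[j] * x + b1[j]) for j in range(hidden)]
        return h, sum(w2[j] * h[j] for j in range(hidden)) + b2

    n = len(samples)
    losses = []
    for _ in range(epochs):
        # Accumulate gradients of the mean squared error over all samples.
        gw1, gb1, gw2 = [0.0] * hidden, [0.0] * hidden, [0.0] * hidden
        gb2 = 0.0
        loss = 0.0
        for x, y in samples:
            h, out = forward(x)
            err = out - y
            loss += err * err / n
            d_out = 2 * err / n
            gb2 += d_out
            for j in range(hidden):
                gw2[j] += d_out * h[j]
                # Chain rule through tanh: d tanh(z)/dz = 1 - tanh(z)^2
                d_h = d_out * w2[j] * (1 - h[j] * h[j])
                gw1[j] += d_h * x
                gb1[j] += d_h
        losses.append(loss)
        # Gradient descent: move every weight a little against its gradient.
        for j in range(hidden):
            w1[j] -= lr * gw1[j]
            b1[j] -= lr * gb1[j]
            w2[j] -= lr * gw2[j]
        b2 -= lr * gb2

    def predict(x):
        return forward(x)[1]

    return predict, losses


# Hypothetical toy data: a smooth curve standing in for real measurements.
data = [(x / 10, math.sin(x / 10)) for x in range(-10, 11)]
predict, losses = train_tiny_network(data)
print(f"error before training: {losses[0]:.4f}, after: {losses[-1]:.6f}")
print(f"prediction at 0.5: {predict(0.5):.3f} (true value {math.sin(0.5):.3f})")
```

Nothing here is stored as a lookup table of past cases: after training, the "experience" lives entirely in the handful of numbers `w1`, `b1`, `w2` and `b2`, which is why the network can also produce answers for inputs it never saw.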

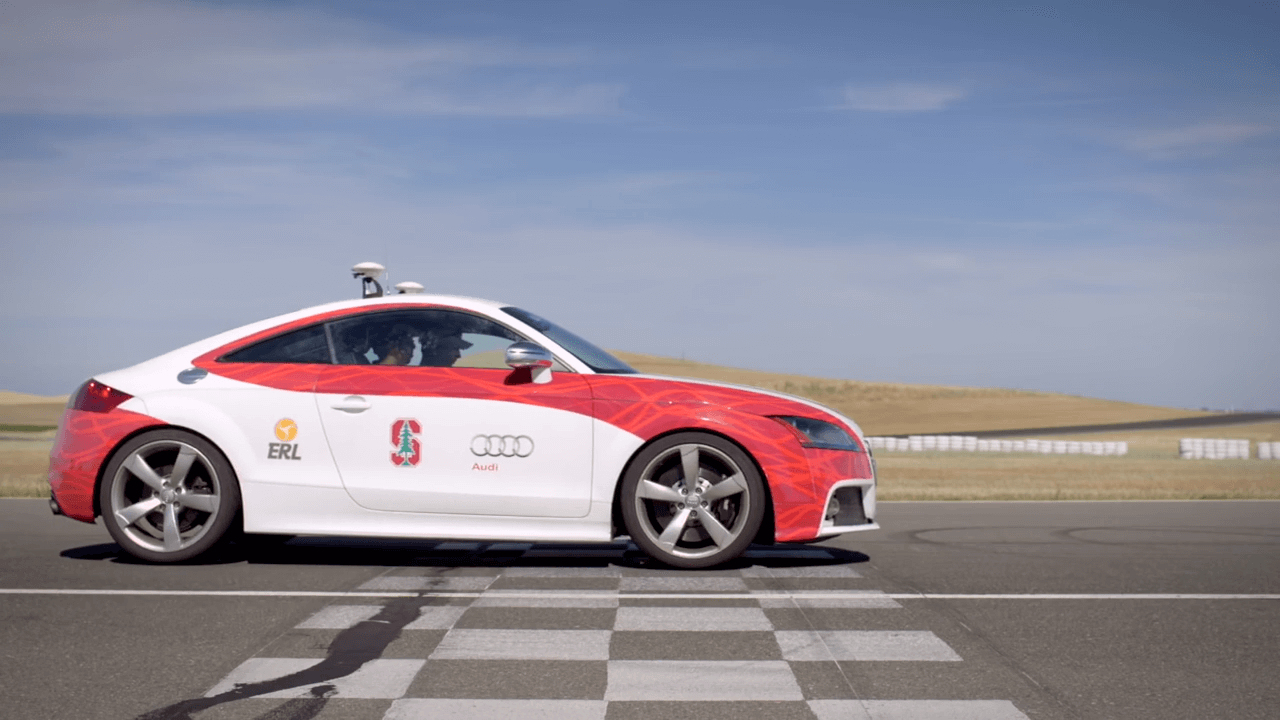

To demonstrate this new program, a research team at Stanford headed out to Thunderhill Raceway in Willows, California. Once there, the team rolled out its specially prepared Audi TT and VW GTI autonomous vehicles.

The team split the experiment into two parts. The first relied on a physics-based program, while the second used the neural network.

For the first part, the team fed the physics-based program several variables, including track conditions and data about the car. It then loaded the program into the Audi TT and let it take to the track.

Using the first five turns of the East Course, the TT ran 10 laps in autonomous mode. Each run was timed and recorded with a dSPACE MicroAutoBox II.

Then, to provide a human baseline, the team handed the car over to an amateur racing driver. The paper did not name the driver, but it did say he was part of the research team and a regular at Thunderhill.

He drove the same short course as the autonomous Audi, and, as with the earlier runs, his data was recorded and compiled.

Once both sets of runs were complete, the team compared the data. As expected, the results were fairly similar, since both the human and the computer had all the relevant information up front.

That's all well and good, but the team recognized that an autonomous vehicle out on public roads won't have those variables handed to it. This is where the second program, the neural network, comes into play.

Trained on 200,000 data points, including some captured during testing near the Arctic Circle, the neural network is pretty robust. However, it knew nothing about Thunderhill Raceway, which made the track the perfect place to see how it performed in an unfamiliar environment.

The team loaded the neural network into the GTI and let it run the course. Surprisingly, it drove the course much like the Audi had, even though it was never given the track data the Audi's program relied on.

So what does this prove? The Stanford team hopes the approach will improve the way an autonomous car thinks when road surfaces offer varying levels of grip.

For example, say it's raining and an autonomous vehicle needs to change lanes quickly. Conventional programming can't account for every possible surface and could over- or under-correct. A neural network, though, could draw on past experience and make a more informed decision.

In theory, this could provide a safer outcome for both the passengers and whatever the car is attempting to avoid.
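Why grip matters so much can be seen in a simplified textbook model: on a flat curve, a car stays on its intended path only while the required lateral acceleration, v²/r, stays below μ·g, where μ is the tire-road friction coefficient. The Python sketch below uses that point-mass model, which is an assumption for illustration and not the Stanford team's physics-based program; the friction values (0.9 for dry, 0.5 for wet) and the 50 m curve radius are hypothetical.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def max_corner_speed(mu: float, radius_m: float) -> float:
    """Highest steady speed (m/s) before the tires run out of grip.

    Simplified point-mass model: v^2 / r = mu * g, ignoring aerodynamics,
    road banking and weight transfer.
    """
    return math.sqrt(mu * G * radius_m)


# Hypothetical values: a controller that assumes dry-road grip on a wet road
# plans for a cornering speed the tires cannot actually deliver.
assumed_mu, actual_mu, radius = 0.9, 0.5, 50.0
planned = max_corner_speed(assumed_mu, radius)
possible = max_corner_speed(actual_mu, radius)
print(f"planned limit: {planned * 3.6:.0f} km/h, real limit: {possible * 3.6:.0f} km/h")
```

Because the limit scales with the square root of μ, misjudging the grip by a wide margin leads to a sizable speed error, which is exactly the kind of over-correction or under-correction a controller with a fixed surface assumption risks.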

There's still a long way to go before this is commonplace in vehicles, but it's a step in the right direction toward safer cars. Although if the team needs any more help, maybe it could talk to Dr. Yamauchi again.
