Polyphony Digital is developing a new rendering system for Gran Turismo that uses neural networks to determine which objects in a scene need to be drawn, and the early results suggest it could meaningfully improve performance on PlayStation 5.

The system, called “NeuralPVS”, was detailed in a technical presentation at the Computer Entertainment Developers Conference (CEDEC) last year. The talk was given by two Polyphony graphics engineers: Yu Chengzhong and Hajime Uchimura.

Yu joined Polyphony Digital in 2024 after graduating from Tokyo University of Science; while there, he presented a technical paper on real-time volumetric rendering at SIGGRAPH 2023. Uchimura is a longer-serving engineer who has been with the studio since 2008 and is responsible for much of the existing technology that NeuralPVS aims to improve, including GT7’s current precomputed occlusion culling system. He has also worked on the game’s color reproduction systems (particularly car body paint) and HDR image processing for the Scapes photo mode. GTPlanet readers may also recognize him as a co-presenter of the studio’s physically based tone mapping talk at SIGGRAPH 2025.

The presentation was originally delivered in Japanese, and you can download the original slides here. We translated it and digested the technical information as best we could to help determine what it could mean for the future of Gran Turismo.

Polyphony Digital has a history of sharing its technical work at CEDEC and other conferences, and these talks offer a rare and fascinating look behind the scenes at how the secretive studio’s custom technologies actually work. Past presentations have covered topics ranging from the “Iris” ray tracing system used in GT Sport to its circuit scanning and course creation methods and its procedural landscape generation techniques.

How Rendering Works Now

Every frame Gran Turismo renders contains thousands of objects: buildings, trees, grandstands, barriers, track surfaces, and everything else that makes up a course environment. But, at any given moment, only a fraction of those objects are actually visible to the player. Some are behind the camera, some are off to the side, and some are hidden behind other objects in the scene.

Drawing all of those invisible objects would be a waste of processing power. So the game uses a process called “culling” to figure out which objects can be skipped. The better the culling, the less work the CPU and GPU have to do, which means more stable frame rates and potentially more room for visual detail.

Gran Turismo 7 currently uses a precomputed culling system. Before a course ships, Polyphony’s tools render the track from thousands of camera positions along the driving surface, recording which objects are visible from each spot. Those results are stored as visibility lists (internally referred to as “vision lists”) that the game looks up at runtime.

To keep the data manageable, the system clusters those thousands of sample points into a smaller set of zones using a mathematical technique called Voronoi partitioning. At runtime, the game figures out which zone the camera is in and uses that zone’s visibility list to decide what to draw.
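The runtime half of this scheme can be sketched in a few lines. The example is purely illustrative: the zone seeds, object names, and the helpers `find_zone` and `objects_to_draw` are hypothetical, and it works in 2D for readability. It relies on the defining property of a Voronoi partition: a point belongs to the cell of whichever seed is closest, so finding the camera's zone is a nearest-seed search.

```python
import math

# Hypothetical zone seeds: cluster centres of the sampled camera positions (metres).
# 2D for readability; a real track would use 3D positions.
ZONE_SEEDS = [(0.0, 0.0), (100.0, 0.0), (200.0, 50.0)]

# Hypothetical per-zone visibility lists: object IDs to draw while in each zone.
ZONE_VISIBILITY = [
    {"pit_building", "grandstand_a", "barrier_1"},
    {"grandstand_a", "barrier_1", "barrier_2"},
    {"barrier_2", "tunnel_entrance"},
]


def find_zone(camera_xy):
    """Index of the Voronoi cell containing camera_xy.

    A point lies in a seed's Voronoi cell exactly when that seed is its
    nearest seed, so a nearest-neighbour search is all that is needed.
    """
    return min(range(len(ZONE_SEEDS)),
               key=lambda i: math.dist(camera_xy, ZONE_SEEDS[i]))


def objects_to_draw(camera_xy):
    """Look up the precomputed visibility list for the camera's zone."""
    return ZONE_VISIBILITY[find_zone(camera_xy)]


# Two camera positions 2 m apart, on either side of the zone 0 / zone 1 boundary.
print(sorted(objects_to_draw((49.0, 0.0))))
print(sorted(objects_to_draw((51.0, 0.0))))
```

Moving the camera just 2 m, from x = 49 to x = 51, crosses the boundary between zone 0 and zone 1, and `pit_building` drops off the draw list in a single step. That hard switch is inherent to a zone lookup.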

Where The Current System Falls Short

This clustering approach works, but it has some inherent limitations.

The boundaries between zones are hard lines, which means visibility can only change in abrupt, discontinuous jumps as the camera crosses from one zone to the next. Those boundaries don’t always line up neatly with the actual geometry of the course, either, which can lead to objects popping in or out at moments that don’t look natural.

The number of zones is also a manual tuning parameter. Too few and the culling is too coarse. Too many and the data gets unwieldy. It’s a balancing act that has to be revisited for each track.

Neural Networks to the Rescue!

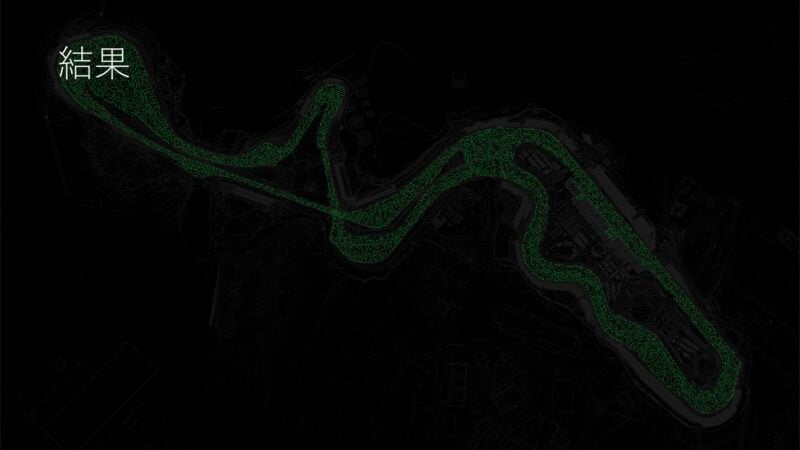

NeuralPVS replaces that zone-based lookup with a neural network that learns the relationship between a camera position and which objects should be visible. Instead of snapping to the nearest precomputed zone, the network takes the camera’s exact coordinates and outputs a visibility prediction for every object in the scene.

The result is a smooth, continuous visibility field rather than a patchwork of discrete zones. Objects transition in and out of visibility gradually as the camera moves, rather than flipping on and off at arbitrary zone boundaries.
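The “position in, visibility out” idea can be sketched as a tiny fully connected network that maps a camera position to one score per object. This is an assumption-laden illustration, not Polyphony’s network: the layer sizes, `N_OBJECTS`, the 0.5 threshold, and the helper names are hypothetical, the weights are random rather than trained, and positions are assumed to be normalised within a region. For simplicity it feeds raw coordinates straight into the network.

```python
import math
import random

N_OBJECTS = 5  # hypothetical number of objects in this region


def relu(v):
    return max(0.0, v)


def sigmoid(v):
    # Numerically stable logistic function.
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def make_layer(n_in, n_out, rng):
    """Dense layer as (weights[n_out][n_in], biases[n_out]), randomly initialised."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dense(x, layer, activation):
    weights, biases = layer
    return [activation(sum(w * xi for w, xi in zip(row, x)) + b)
            for row, b in zip(weights, biases)]


# Untrained placeholder weights; a real network would be fitted offline to the
# precomputed visibility samples for this region.
rng = random.Random(0)
HIDDEN = make_layer(3, 16, rng)          # camera (x, y, z) -> 16 hidden units
OUTPUT = make_layer(16, N_OBJECTS, rng)  # hidden units -> one logit per object


def visibility(camera_xyz):
    """Visibility score in [0, 1] for every object, at an exact camera position.

    Assumes coordinates are normalised to roughly [-1, 1] within the region.
    """
    return dense(dense(camera_xyz, HIDDEN, relu), OUTPUT, sigmoid)


def objects_to_draw(camera_xyz, threshold=0.5):
    """Indices of objects whose predicted visibility clears the threshold."""
    return [i for i, p in enumerate(visibility(camera_xyz)) if p >= threshold]
```

Because every operation is continuous, the scores change only slightly when the camera moves slightly. The point at which an object switches on or off therefore falls wherever its own learned score crosses the threshold, rather than at a boundary shared by every object in a zone.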

The approach was inspired by NeRF (Neural Radiance Fields), a technique from the research community that uses neural networks to represent 3D scenes. Polyphony’s team recognized that their visibility mapping problem (position in, visibility out) had a structurally similar shape and adapted the concept.

Each course is divided into regions, and each region gets its own small neural network. The team tested several network architectures and settled on one using Fourier Feature Mapping, which maps input coordinates into a higher-dimensional space before processing them. This gave the best balance of accuracy and speed.
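In its common form, with random Gaussian frequencies, Fourier feature mapping encodes an input point v as γ(v) = [cos(2πBv), sin(2πBv)], where B is a fixed random matrix. The network then sees these sinusoids instead of the raw coordinates, which makes sharp changes easier to learn. The sketch below assumes that form. The presentation’s exact variant, the number of features, and the `scale` value are not given here, so `make_frequency_matrix`, `fourier_features`, and their defaults are hypothetical.

```python
import math
import random


def make_frequency_matrix(n_features, in_dim=3, scale=10.0, seed=0):
    """Random frequency matrix B (n_features x in_dim), entries ~ N(0, scale^2).

    n_features and scale are hypothetical hyperparameters; a larger scale lets
    the network represent sharper changes in visibility.
    """
    rng = random.Random(seed)
    return [[rng.gauss(0.0, scale) for _ in range(in_dim)] for _ in range(n_features)]


def fourier_features(v, B):
    """gamma(v) = [cos(2*pi*B v), sin(2*pi*B v)], giving 2 * n_features outputs."""
    proj = [2.0 * math.pi * sum(b * x for b, x in zip(row, v)) for row in B]
    return [math.cos(p) for p in proj] + [math.sin(p) for p in proj]


B = make_frequency_matrix(n_features=8)
features = fourier_features((0.10, 0.20, 0.30), B)  # 16 values fed to the network
```

The encoded vector replaces the raw (x, y, z) as the network’s input layer; everything after it is an ordinary small network.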

Seeing Terrain

One of the more interesting details from the presentation is how the neural network handles course geometry that the current system struggles to fully exploit.

On a course like Eiger Nordwand, which is wide open, the existing culling system has few large structures to work with, leaving the course’s own terrain as the primary source of occlusion. The precomputed rendering step does detect when terrain features like hills and ramps block visibility from specific camera positions; the problem is what happens next.

When those thousands of individual data points get merged into broader zones, the system has to be conservative: if an object is visible from any position within a zone, it stays on that zone’s visibility list. A hill might block your view of a building from one spot, but if another spot in the same zone can see that building, it gets drawn anyway. The finer details of what the terrain hides get lost in the merge.
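The conservative merge amounts to a bitwise OR of the per-sample visibility sets. In this hypothetical sketch each object is one bit, and a zone holds three sample points, one of which has a hill blocking the building. The object names and sample data are made up for illustration.

```python
# Hypothetical object IDs, one bit each, so a visibility list is an int bitmask.
BUILDING, GRANDSTAND, BARRIER = 1 << 0, 1 << 1, 1 << 2

# Visibility recorded at three hypothetical sample points inside one zone.
# At sample 0 a hill hides the building; at samples 1 and 2 it is visible.
samples = [
    GRANDSTAND | BARRIER,
    BUILDING | GRANDSTAND | BARRIER,
    BUILDING | BARRIER,
]


def merge_zone(sample_masks):
    """Conservative merge: keep an object if ANY sample in the zone can see it."""
    zone = 0
    for mask in sample_masks:
        zone |= mask
    return zone


zone_mask = merge_zone(samples)
# Objects the zone list draws at sample 0 even though they are hidden there.
wasted = zone_mask & ~samples[0]
```

`wasted` comes out as `BUILDING`: standing at sample 0, the zone’s list still draws the building even though the terrain hides it there.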

The neural network doesn’t have this problem. Because it predicts visibility for exact camera coordinates rather than broad zones, it can preserve the occlusion effect of terrain features at the precise positions where they actually matter.

Making It Fast

A neural network is only useful for culling if it’s fast enough to run every frame without eating into the performance gains it creates. Polyphony’s team addressed this with an aggressive quantization pipeline: network weights are compressed from 32-bit floating point down to 8-bit integers, and the inference code is hand-optimized to use SSE instructions on the CPU.
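One common way to do float-to-int8 quantization is a symmetric per-tensor scale: each value is stored as an int8 `q` with value ≈ scale × q, dot products run entirely in integers, and a single floating-point multiply restores the scale at the end. The presentation’s exact scheme (per-layer or per-channel scales, zero points, accumulator widths) is not described, so treat this as an assumed scheme. The weights, inputs, and function names are hypothetical, and the Python only mirrors the arithmetic that a hand-written SSE kernel would perform.

```python
def quantize_int8(values):
    """Symmetric per-tensor int8 quantization: value ~ scale * q, q in [-127, 127]."""
    max_abs = max(abs(v) for v in values) or 1.0  # avoid a zero scale
    scale = max_abs / 127.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dot_int8(q_a, scale_a, q_b, scale_b):
    """Integer multiply-accumulate, then a single floating-point rescale.

    SIMD int8 kernels follow the same pattern, accumulating into wider integers.
    """
    acc = sum(a * b for a, b in zip(q_a, q_b))
    return acc * scale_a * scale_b


weights = [0.5, -1.0, 0.25, 0.75]  # hypothetical float32 weights
inputs = [1.0, 2.0, -0.5, 0.1]     # hypothetical activations

q_w, s_w = quantize_int8(weights)
q_x, s_x = quantize_int8(inputs)
exact = sum(w * x for w, x in zip(weights, inputs))  # float reference
approx = dot_int8(q_w, s_w, q_x, s_x)                # int8 path
```

Each stored weight drops from four bytes to one, and the inner loop becomes pure integer work, which is what makes SIMD instructions such as SSE a good fit.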

The quantization shrank data sizes by an average factor of about 2.6 while maintaining culling quality, and the optimized inference path brought per-query times down to roughly 33 microseconds on average across the courses tested. That’s fast enough to be a non-factor in the frame budget.
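To put 33 microseconds in context, the arithmetic below compares it with the 16.67ms frame budget at 60fps. How many queries run per frame isn’t stated, so `queries_per_frame` is left as a parameter; `budget_share` is a hypothetical helper.

```python
FRAME_BUDGET_US = 1_000_000 / 60  # about 16,667 microseconds per frame at 60 fps
QUERY_US = 33.0                   # average per-query inference time reported in the talk


def budget_share(queries_per_frame, query_us=QUERY_US, budget_us=FRAME_BUDGET_US):
    """Fraction of a 60 fps frame spent on culling queries.

    queries_per_frame is a free parameter; the per-frame count is not given.
    """
    return queries_per_frame * query_us / budget_us


print(f"{budget_share(1):.2%} of the frame for one query")
```

A single query comes to about 0.2% of the frame, and even ten queries per frame would stay around 2%.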

All of the training is done offline on CPUs (the networks are small enough that GPU training is actually slower), and the process is parallelized across clusters. The system is designed to be largely automated, which matters when you’re building culling data for a game with over 30 tracks.

PS5 Benchmarks

The presentation included benchmark data from PlayStation 5 running on two courses: Eiger Nordwand and Grand Valley.

On Eiger Nordwand, average CPU frame time dropped from 3.944ms to 3.758ms with NeuralPVS enabled. GPU improvements were smaller (an average saving of 0.026ms), which makes sense given that Eiger is a course with relatively few occluders. The gains come from the network exploiting terrain occlusion that the existing system’s zone merging throws away.

Grand Valley showed more dramatic results. CPU average dropped from 4.552ms to 4.256ms, and the CPU maximum dropped from 6.378ms to 5.849ms, a reduction of over half a millisecond. GPU load was also more stable across the course, with the maximum GPU time dropping by nearly 0.1ms.

Half a millisecond might not sound like much in isolation, but in a 60fps rendering pipeline where every frame has a 16.67ms budget, shaving time off the CPU and GPU means more headroom for everything else. That could translate to more objects on screen, better lighting, or simply more consistent frame pacing in demanding scenes.
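The headline numbers are easy to check. This sketch recomputes the CPU savings from the frame times quoted above, both as a percentage of each course’s CPU time and as a share of a 16.67ms frame at 60fps. The dictionary layout and `summarize` are just for illustration, and the GPU figures are left out because only the size of the change was quoted for them. Measuring against the whole frame budget is a rough yardstick, since CPU and GPU work largely overlap.

```python
FRAME_BUDGET_MS = 1000.0 / 60.0  # 16.67 ms per frame at 60 fps

# PS5 CPU frame times from the presentation, in ms: (baseline, with NeuralPVS).
RESULTS = {
    "Eiger Nordwand CPU avg": (3.944, 3.758),
    "Grand Valley CPU avg": (4.552, 4.256),
    "Grand Valley CPU max": (6.378, 5.849),
}


def summarize(results, budget_ms=FRAME_BUDGET_MS):
    """Map each row to (ms saved, % of its CPU time, % of the frame budget)."""
    rows = {}
    for name, (before, after) in results.items():
        saved = before - after
        rows[name] = (saved, 100.0 * saved / before, 100.0 * saved / budget_ms)
    return rows


for name, (saved, pct_time, pct_budget) in summarize(RESULTS).items():
    print(f"{name}: -{saved:.3f} ms ({pct_time:.1f}% of CPU time, "
          f"{pct_budget:.1f}% of a 60 fps frame)")
```

The largest saving, 0.529ms on Grand Valley’s CPU maximum, works out to a little over 3% of a 60fps frame, while the averages come to roughly 1 to 2%.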

What It Means for Gran Turismo’s Future

Although the presentation was held last year, to the best of our knowledge NeuralPVS is not live in Gran Turismo 7 yet.

The presenters were explicit about this at the time, stating they are “considering introducing this into the product going forward”. That phrasing leaves open whether it arrives as a GT7 update, as part of a future title, or both. Of course, even if it did arrive in GT7, such a highly technical feature would almost certainly not be disclosed in patch notes for the general public.

It’s clear, though, that the system is well past the research stage. The full pipeline is built, it’s been benchmarked on real courses running on real PS5 hardware, and the team described it as “almost fully automatic”. That last point is significant: an automated system scales much more easily across a large track roster than one that requires per-course manual tuning.

The presentation also noted that the system is designed to handle the “high-definition, high-load models” of recent courses. As Polyphony continues to add more detailed environments (whether to GT7 or its successor), the rendering budget gets tighter. A smarter culling system that can squeeze out additional performance without any visual compromise is exactly the kind of behind-the-scenes technology that keeps the series looking and running the way players expect.

Polyphony shares details of its technology publicly only occasionally, so the fact that two of its engineers presented this work at CEDEC is notable in itself. It’s a signal that the studio sees AI-assisted rendering as a meaningful part of Gran Turismo’s technical direction, not just an experiment.
