A new paper posted to the arXiv research repository has demonstrated the possibility of teaching AI how to race in Gran Turismo using only the same visual cues as human drivers.

The research, conducted by Sony AI — a recently created subsidiary of Sony to study applications for AI — follows up on a similar study from August 2020, but with some key differences.

In the previous study, the authors used a reinforcement learning method to teach an AI to go as fast as it possibly could based purely on its physical interactions with the environment. It learned what paths resulted in collisions and slower lap times, and eventually achieved “super-human” performance, beating even the best Gran Turismo Sport drivers over a lap.

The new paper focuses on visual cues instead. In essence, the paper’s lead author, Ryuji Imamura, proposes that the information conveyed while driving (the track itself, the head-up display, and force feedback) is sufficient for a human driver to learn how to drive quickly, and thus should be enough for an AI to learn to drive at human speeds.

While the methodology is a little tricky to follow, it ultimately consisted of running an AI driver from an initial “seed” lap through several hundred batches (or “epochs”) of laps, with the AI agent observing its laps through a capture device and through speed and acceleration data provided by the console itself.

After each set of tests, the new data would be analyzed and used to generate new control outputs for the next set.
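
The batch-by-batch loop described above can be illustrated with a toy sketch. This is not the paper's algorithm: it swaps in a simple random local search, and `simulate_lap`, `throttle_bias`, and the lap time formula are all hypothetical stand-ins for driving real laps in the game. It only shows the general shape of "run a batch, analyze it, produce new control settings for the next batch".

```python
import random


def simulate_lap(throttle_bias, rng):
    """Hypothetical stand-in for one lap; returns a lap time in seconds.

    In this toy model the fastest setting is throttle_bias = 0.7. The real
    study drove laps in the game rather than evaluating a formula.
    """
    base_time = 100.0
    penalty = 50.0 * (throttle_bias - 0.7) ** 2
    noise = rng.uniform(0.0, 0.2)
    return base_time + penalty + noise


def train(epochs, laps_per_epoch, seed=0):
    """Run batches of laps, keeping the best control setting seen so far."""
    rng = random.Random(seed)
    best_bias = 0.2  # setting used for the initial "seed" lap (assumed value)
    best_time = simulate_lap(best_bias, rng)
    history = [best_time]
    for _ in range(epochs):
        # Drive one batch of laps with small variations on the current setting.
        candidates = [
            min(1.0, max(0.0, best_bias + rng.gauss(0.0, 0.05)))
            for _ in range(laps_per_epoch)
        ]
        results = [(simulate_lap(bias, rng), bias) for bias in candidates]
        # Analyze the batch; its best lap sets the controls for the next batch.
        epoch_time, epoch_bias = min(results)
        if epoch_time < best_time:
            best_time, best_bias = epoch_time, epoch_bias
        history.append(best_time)
    return best_bias, history


best_bias, history = train(epochs=50, laps_per_epoch=10)
```

Here `history` holds the best lap time after each epoch, so plotting it would give a curve that drops quickly and then flattens, broadly the shape the article describes for the real results.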

The lap time data for each epoch was compared to a batch of 28,000 user-generated lap times held by Polyphony Digital for the same event, which was an arcade mode time trial at Tokyo Expressway Central Outer Loop in the Mazda Demio XD Touring ’15. This was the same dataset used in the previous “super-human” AI study.

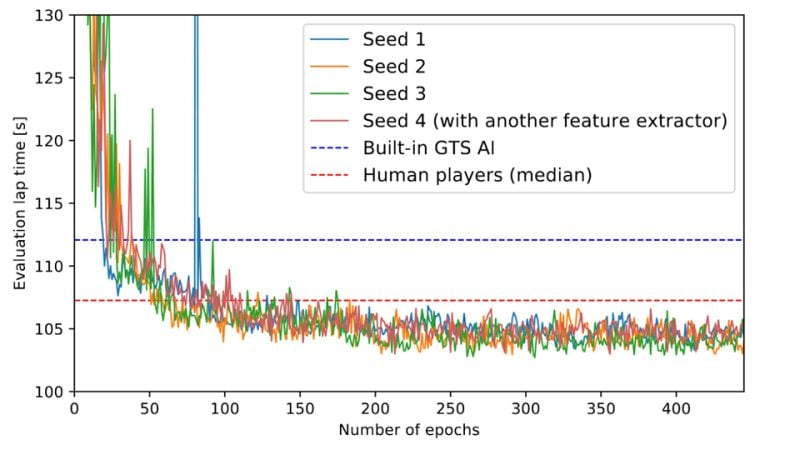

As you can see, the visual learning AI quickly beat the lap times generated by GT Sport’s own AI, getting to that mark in around 20-30 epochs, equivalent to 350-500 laps.

It didn’t take much longer to exceed the median lap time (the value that separates the bottom 50% of times from the top 50% of times) of the human players either, but improvements seem to stagnate at around 200 epochs, or about 3,500 laps.
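
As a quick sanity check on those figures, the epoch and lap counts quoted in this article imply a rough batch size. The snippet below is only arithmetic on the article's rounded numbers, and the function names are made up for illustration. The paper itself may define its batches differently.

```python
def laps_per_epoch(epochs, laps):
    """Average number of laps in one epoch, from a quoted epoch/lap pair."""
    return laps / epochs


def epochs_to_laps(epochs, rate):
    """Convert an epoch count to laps at a given laps-per-epoch rate."""
    return epochs * rate


# "around 200 epochs, or about 3,500 laps"
rate = laps_per_epoch(200, 3500)   # 17.5 laps per epoch

# "around 20-30 epochs" at the same rate
low = epochs_to_laps(20, rate)     # 350.0 laps
high = epochs_to_laps(30, rate)    # 525.0 laps
```

At 17.5 laps per epoch, 20-30 epochs works out to 350-525 laps, which is consistent with the rounded "350-500 laps" quoted for beating GT Sport's own AI.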

Ultimately, by using more-or-less the same cues as a human player, the visual learning AI managed to achieve a lap time within the top 10% of players, at around 3.3s slower than the fastest human, 4.5s faster than the median human, and a whopping 9.5s faster than GT Sport’s own AI.
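
For readers curious how a ranking like "top 10%" or a gap to the median is worked out from a set of lap times, here is a minimal sketch. The lap times below are invented purely for illustration and are not the Polyphony Digital dataset, and `fraction_beaten` and `gaps` are hypothetical helper names.

```python
from statistics import median


def fraction_beaten(lap_time, human_times):
    """Share of human lap times that are slower than lap_time."""
    slower = sum(1 for t in human_times if t > lap_time)
    return slower / len(human_times)


def gaps(lap_time, human_times):
    """Gap to the fastest human (positive = slower than the fastest)
    and to the median human (positive = faster than the median)."""
    return {
        "to_fastest": lap_time - min(human_times),
        "to_median": median(human_times) - lap_time,
    }


# Made-up human lap times in seconds: 100.0, 100.5, ..., 110.0
human_times = [100.0 + 0.5 * i for i in range(21)]
ai_time = 101.0  # made-up AI lap time

share = fraction_beaten(ai_time, human_times)
result = gaps(ai_time, human_times)
```

With this invented data, the AI lap beats 18 of the 21 human times, sits 1.0s behind the fastest, and is 4.0s ahead of the median. A lap in the "top 10%" would be one where `fraction_beaten` is 0.9 or higher.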

This is the second study we know of in which Polyphony Digital has participated that looks at ways to use machine learning to improve AI driver times, and that is good news. It suggests that PD is aware of the limitations of its current AI and is seeking to make it better.

With Gran Turismo 7 looking to return to a more traditional format, with offline races featuring AI, a more capable AI would make for a more satisfying user experience.

Although it has yet to be peer-reviewed and published, you can read the paper in full here.
