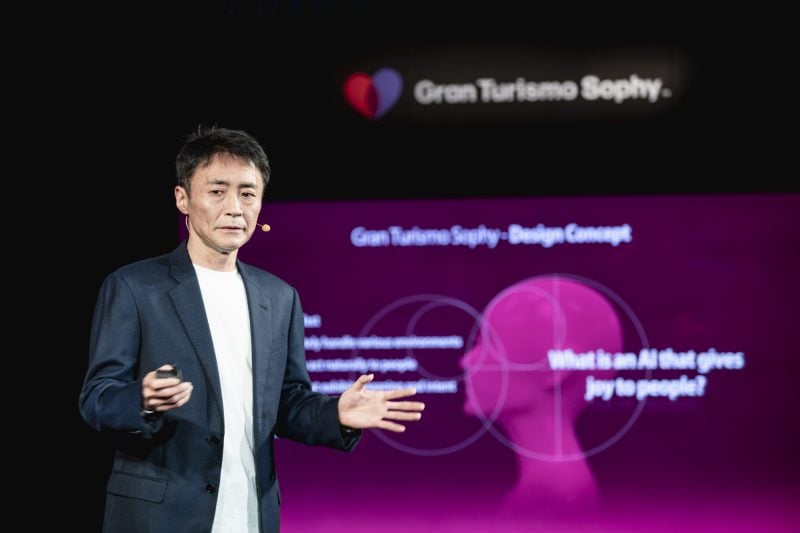

Polyphony Digital recently unveiled Gran Turismo Sophy, a new artificial intelligence driving system which will appear in Gran Turismo 7. The technology was developed in collaboration with a 25-person team at Sony AI, utilizing the latest advancements in machine learning. The team’s research was published in Nature and GT Sophy was tested against (and defeated!) some of the best Gran Turismo drivers in the world at a live event in Tokyo last year.

However, the revelation of GT Sophy raised nearly as many questions as it answered. How exactly does the technology work? How will it actually be integrated into GT7, and what kind of limitations does it have?

To help answer all of these questions, we studied the Nature publication and spoke with Gran Turismo series creator Kazunori Yamauchi and Director of Sony AI America, Peter Wurman, in an exclusive interview. Here’s what we learned.

How Sophy Actually Works

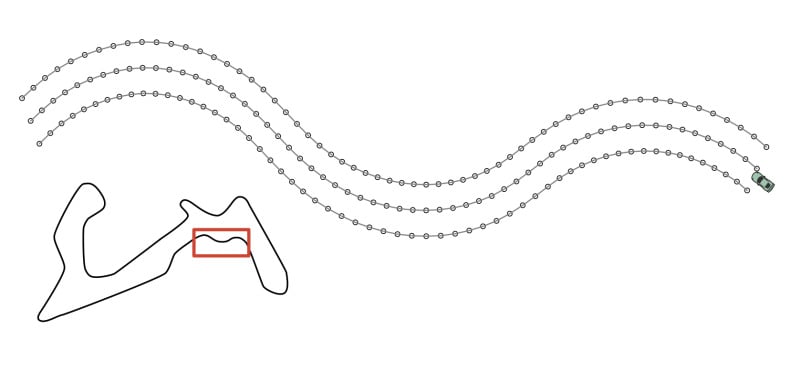

As a “player”, Sophy sees Gran Turismo’s virtual environment as a static map, with the left, right, and center lines defined as 3D points. The track ahead of Sophy is divided into 60 equally-spaced segments, with the length of each segment scaled dynamically to the car’s speed. Together, the segments cover approximately the next 6 seconds of travel at any given time.
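To make the speed-dependent look-ahead concrete, here is a minimal sketch of how 60 equally-spaced points covering roughly 6 seconds of travel could be laid out. The function name and parameters are hypothetical, and it assumes the car holds its current speed over the look-ahead window; it is an illustration of the idea, not Sony AI's actual encoding.

```python
def lookahead_distances(speed_mps, horizon_s=6.0, num_segments=60):
    """Distances (in metres) ahead of the car at which the track is sampled.

    Assumption: the look-ahead spans `horizon_s` seconds at the current
    speed, split into `num_segments` equal pieces.
    """
    segment_length = speed_mps * horizon_s / num_segments
    return [segment_length * (i + 1) for i in range(num_segments)]


# At 50 m/s (180 km/h) each segment is 5 m long and the window reaches 300 m.
points = lookahead_distances(50.0)
print(len(points), points[0], points[-1])
```

The faster the car travels, the longer each segment becomes, so the agent always "sees" about the same amount of time ahead rather than the same distance.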

Sophy also has access to certain information about what the car is doing in its environment, including three-dimensional velocity, angular velocity, acceleration, the load on each tire, and tire-slip angles. It is also aware of the car’s progress along the track, the incline of the track surface, and the car’s orientation to the track’s center line and edges ahead. Sophy is notified by the game if the car makes contact or travels outside the game’s default track boundaries.

In terms of controls, Sophy only has access to acceleration, braking, and left/right steering inputs. It can only modify these inputs at a rate of 10 Hz, or once every 100 milliseconds. It does not have access to gear shifting, traction control, brake balance, or any other parameters typically available to human players.
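The 10 Hz limit can be pictured as a control loop in which each decision is held until the next one is due. The sketch below assumes a 60 Hz game loop and a two-value action (combined throttle/brake, plus steering) clamped to the range -1 to 1; these encodings and all names are illustrative assumptions rather than details confirmed by the interview.

```python
from dataclasses import dataclass


@dataclass
class Action:
    throttle_brake: float  # assumed encoding: -1.0 full brake .. +1.0 full throttle
    steering: float        # assumed encoding: -1.0 full left .. +1.0 full right


def clamp(value, low=-1.0, high=1.0):
    return max(low, min(high, value))


def run(policy, num_frames, frame_hz=60, control_hz=10):
    """Apply `policy` at `control_hz`, holding each action between updates."""
    frames_per_action = frame_hz // control_hz  # 6 frames per decision here
    action = Action(0.0, 0.0)
    applied = []
    for frame in range(num_frames):
        if frame % frames_per_action == 0:
            throttle_brake, steering = policy(frame)
            action = Action(clamp(throttle_brake), clamp(steering))
        applied.append(action)
    return applied


# Hypothetical policy: ramps the throttle and asks for an out-of-range steer.
history = run(lambda frame: (frame / 10, -2.0), num_frames=12)
print(history[5], history[6])
```

Between decisions the previous inputs simply stay in effect, which is why the agent cannot make the rapid micro-corrections a faster update rate would allow.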

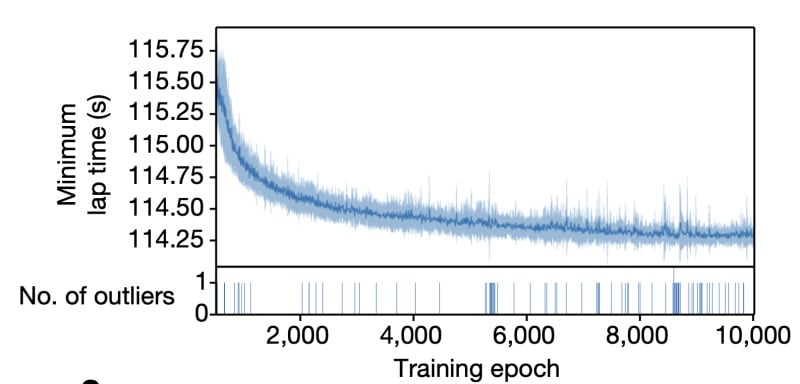

Sophy is presented with these environmental variables and limited inputs, then it gets to work. Using advanced “machine learning” algorithms, it drives the track over and over again. It is “rewarded” — mathematically speaking — by progressing around the track in as little time as possible, and “punished” — again, mathematically speaking — if it makes contact with the walls, other cars, or drives out of bounds.

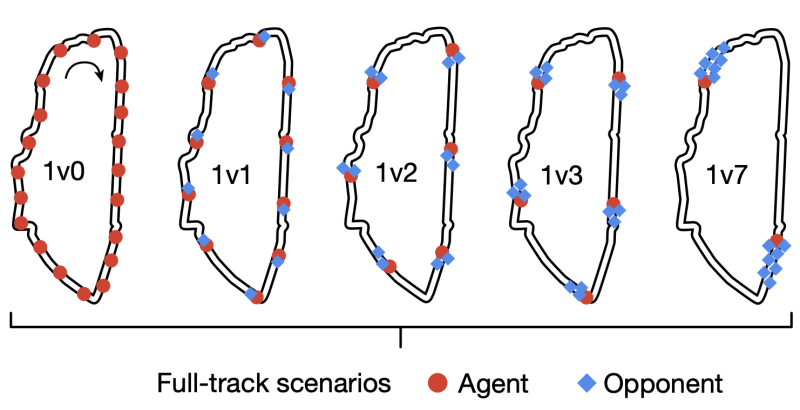

“GT Sophy was trained using reinforcement learning,” Sony AI America director Peter Wurman explained. “Essentially, we gave it rewards for making progress along the track or passing another car, and penalties when it left the track or hit other cars. To make sure it learned how to behave in competitive racing scenarios, we put the agent into lots of different racing situations with several different types of opponents. With enough practice, through trial and error, it was able to learn how to react to the other cars. There was a very fine line between being aggressive enough to hold your own driving line and being too aggressive and causing accidents and getting penalties.”
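The reward-and-penalty scheme Wurman describes can be sketched as a single function evaluated at every time step. The weights below are invented purely for illustration, and the function and parameter names are hypothetical; the real reward design is described in the Nature paper and is more involved than this.

```python
def step_reward(progress_m, cars_passed, off_track, collided,
                w_progress=1.0, w_pass=5.0, w_off_track=10.0, w_collision=20.0):
    """Toy per-step reward: pay for progress and passes, charge for mistakes.

    All weights are made-up placeholders, not values used by Sony AI.
    """
    reward = w_progress * progress_m + w_pass * cars_passed
    if off_track:
        reward -= w_off_track
    if collided:
        reward -= w_collision
    return reward


print(step_reward(2.0, 1, off_track=False, collided=False))  # clean overtake
print(step_reward(2.0, 0, off_track=True, collided=True))    # messy step
```

Tuning the relative size of these weights is the balancing act: make the collision penalty too small and the agent barges through opponents, make it too large and the agent becomes too timid to defend its line.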

Wurman went on to describe the most difficult challenges of actually getting the data processed. “The hard part was figuring exactly how to present that information to the neural networks in the most effective way. For instance, through trial and error, we found that encoding about 6 seconds of the upcoming track was enough information for GT Sophy to make decisions about its driving lines,” he explained. “The other big challenge was balancing the reward and penalty signals to produce an agent that was both aggressive and a good sport.”

Sophy does all of this in real time, on real PlayStation 4s running a special version of Gran Turismo Sport which reports the required positional data and accepts control inputs via network connection. Sophy’s code is executed by servers which communicate with the PlayStations via the network. To help speed up the process, Sophy controls 20 cars on track at the same time. The results are fed into servers with NVIDIA V100 or A100 chips, server-grade GPUs designed to process artificial intelligence and machine learning data.

It is important to note this type of computing power is only needed to “create” Sophy, not run it. The machine learning process eventually results in “models” which can then be executed on more modest hardware.

“The learning of Sophy is parallel processed using compute resources in the cloud, but if you are just executing an already learned network, a local PS5 is more than adequate,” Kazunori Yamauchi explained. “The asymmetry of this computing power is a general characteristic of neural networks.”

How Sophy is Different

Artificial intelligence in racing games has long been a sort of “black box”. How it actually works is something rarely discussed by game developers, yet it is an important part of racing games that all players interact with. We were curious to know more about how AI has worked in Gran Turismo in the past and exactly what makes Sophy different.

As Kazunori Yamauchi explained to us, the machine learning process provides Sophy with more rules of behavior than human programmers could possibly devise, but that strategy comes with its own drawbacks as well.

“The AI up until now was rules-based, so it basically runs as an if-then program,” Yamauchi-san explains. “But no matter how many of these rules are added, it can’t handle conditions and environments other than those defined. Sophy, on the other hand, generates a massive amount of implicit rules that humans can’t handle, within its network layer. As a result it is able to adapt to various conditions and environments. But because these rules are implicit, it means that it’s not possible to make it learn ‘a specific behavior’ that is simple for a rule-based AI.”
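As a rough illustration of the “if-then” style Yamauchi describes, a rules-based driver might look something like the sketch below. Every rule, threshold, and name here is hypothetical and does not reflect Polyphony Digital's actual code.

```python
def rule_based_driver(speed_kph, distance_to_corner_m, gap_to_car_ahead_m):
    """Return (throttle_brake, reason) from a fixed list of hand-written rules."""
    if gap_to_car_ahead_m < 5.0:
        return -0.5, "too close to the car ahead: ease off"
    if distance_to_corner_m < 100.0 and speed_kph > 150.0:
        return -1.0, "fast approach to a corner: brake hard"
    if distance_to_corner_m < 100.0:
        return 0.3, "slow approach to a corner: partial throttle"
    return 1.0, "no rule matched: full throttle"


print(rule_based_driver(200.0, 80.0, 50.0))
print(rule_based_driver(100.0, 500.0, 50.0))
```

Any situation the programmers did not anticipate, such as a car rejoining the track sideways, falls through to the default branch. A learned network has no such explicit list, which is both why it adapts more widely and why a single specific behavior is harder to dictate.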

How Sophy Will Appear in Gran Turismo 7

Although Sophy has been in development over the past few years using Gran Turismo Sport, the technology will actually make its debut in Gran Turismo 7 in a future update to the game. Kazunori Yamauchi’s announcement was light on details, so it was something we were eager to ask him about.

“Sophy will probably appear in front of the player in three forms,” Yamauchi-san explained. “As a teacher that will teach driving to players, a student that will learn sportsmanship from players, and as a friend to race with. I wouldn’t rule out the possibility of a B-Spec mode, where the player is the race director and Sophy is the driver.”

Sophy could also be used as a tool by the game itself. “By principle it is possible to use Sophy for the BoP [Balance of Performance] settings,” Yamauchi added. “If it’s just to align the lap times of different cars, it’s already possible to do that now. But because the BoP settings aren’t just about lap times, we wouldn’t just leave it all to Sophy, but certainly she will be a help in the creation of BoPs.”

Sophy’s Still Learning

As soon as Sophy was revealed, we were curious to learn more about its limitations. The Sony AI team is very much aware of how Sophy can improve and the technology itself is still in active development.

For example, in its current iteration, Sophy is trained on specific tracks in specific conditions, but the team expects the technology will be able to adapt. “These versions of GT Sophy were trained for specific car-track combinations,” Wurman explained. “Enhancing the agent to drive equally well with tweaks to the car’s performance is part of our future work. This version of GT Sophy was also not trained for environmental variations, but we expect the techniques will continue to work under those conditions.”

Because Sophy debuted as a superhuman driver capable of defeating the best Gran Turismo players in the world, questions and concerns immediately arose about its ability to adapt to less competitive human drivers.

According to Peter Wurman, the aim is for Sophy to adjust by genuinely driving like a newer driver instead of just being artificially slowed down. “This is also part of our future work,” the Sony AI America Director explained. “Our goal is to create an agent that, when in a ‘slowed’ mode, is driving like a less experienced driver, rather than being handicapped in some way, like arbitrarily speeding it up or slowing it down in violation of the physics.”

Sony AI’s initial goal was to develop the fastest and most competitive AI possible, which they can then build upon to develop a more general-purpose tool that makes the game more enjoyable for everyone. “Our goal in this project was to show that we could make an agent that could race with the best players in the world. Our ultimate goal is to create an agent that can give players of all kinds an exciting race experience,” Wurman confirmed.

More Details

The research and development that goes into today’s video games, especially Gran Turismo titles, is typically a closely guarded trade secret. This makes the transparency of Sophy’s development all the more refreshing, and fascinating for those interested.

If you want to dig deep and learn more about Sophy’s inner workings, you can read the full peer-reviewed research paper in the February 10, 2022 issue of the scientific journal Nature. The article and abstract are available to download with a subscription. For free access to Nature, check your local library or university.

We are sure to learn more about Sophy after GT7’s release on March 4, 2022. As always, we’ll keep a close eye on any news as it is revealed. Stay tuned!
